LLM Bias Against For-Profit Universities Could Reshape the Industry

AI-driven discovery will increase customer acquisition costs. Will it push the sector toward consolidation to drive efficiencies in the face of margin pressure?

Summary

• Large language models (LLMs) will become the most powerful gatekeepers in higher education, replacing Google as the place where prospective students search for information.

• When I asked several major LLMs about for-profit universities, the responses were strikingly biased: warnings about reputation, skepticism about employer perception, and frequent recommendations to consider nonprofit or state universities instead.

• Unlike search engines, LLMs don’t just show links. They summarize narratives. That means decades of negative headlines, regulatory battles, and online criticism of the sector can be distilled into a single AI-generated answer in seconds.

• If AI becomes the primary discovery layer for education, institutions that historically relied on search engine optimization (SEO) and paid keywords could see their customer acquisition costs increase, pressuring margins.

• The largest operators may adapt. Smaller schools may not. If AI begins shaping how millions of prospective students evaluate higher ed, the next consolidation wave will start soon.

Introduction

LLMs, when used for search, do not simply present links. They summarize reputations.

That seemingly small change could have profound implications for institutions in the for-profit higher education sector.

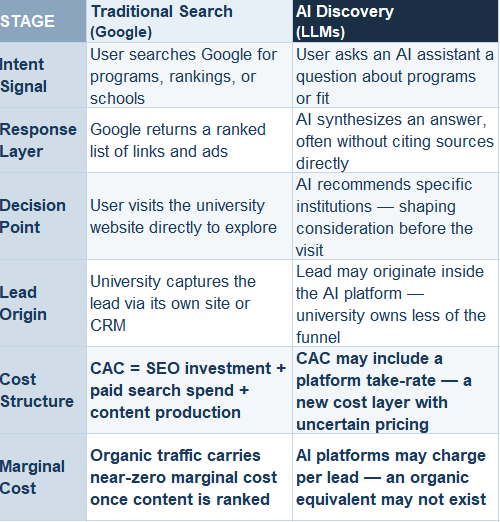

For decades, student discovery in higher education has been dominated by search engines. Prospective students type queries such as online MBA or nursing degree into Google, and institutions compete for visibility through SEO and paid keywords. As the discovery layer shifts from search engines to LLMs, the economics of customer acquisition will change dramatically.

One may disagree with the assertions I make in this article, but I hope one observation is not controversial.

LLMs display a consistent negative bias when discussing for-profit universities in chat interactions.

Even when asked the most cursory questions, some of the LLMs go out of their way to highlight negatives about companies in the sector.

It goes beyond that. In many cases, models explicitly recommend that prospective students consider alternatives at state universities, nonprofit institutions, or community colleges.

Because I own several publicly traded education stocks, I ran a simple experiment this past weekend. I asked several widely used LLMs a vague question resembling what a prospective student might ask:

I’m thinking about going to this school for this program. What are your thoughts? Should I go?

The schools I asked about included several publicly traded institutions as well as smaller lesser-known schools. I intentionally provided no additional context to observe the default framing used by the models.

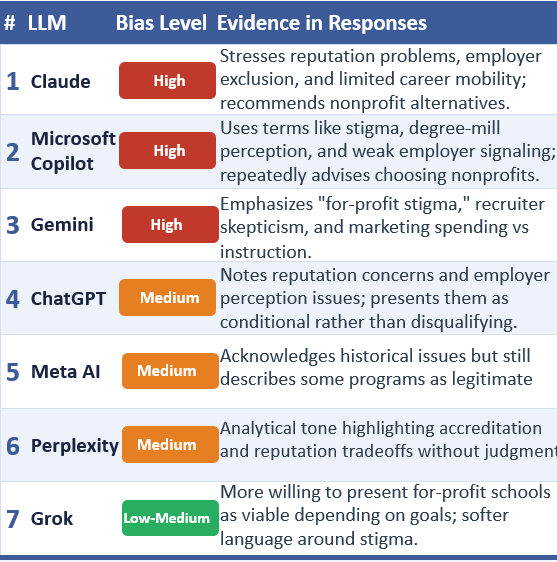

I then fed the full set of responses back into ChatGPT and asked it to evaluate the level of bias across the different LLM systems and rank them accordingly. Here is the output (nicely formatted by Claude).

You can see from the table that Claude displayed the strongest bias in its responses, while Grok appeared the least biased (at least by ChatGPT’s evaluation of my conversations). That contrast is interesting in light of how the companies behind these systems are often perceived. Anthropic, the developer of Claude, has recently been portrayed as more politically progressive in its public disputes with the Trump administration, whereas Grok, developed by Elon Musk’s xAI, is marketed as a more “anti-woke” or politically heterodox model. Perhaps this says something about the broader biases of the LLM developers.

Across multiple LLMs, similar patterns emerged. Even when the models acknowledged that the programs were accredited and legitimate, the responses included cautionary narratives about reputation, employer perception, or historical controversies involving the sector.

Interestingly, the level of bias varied by program type. Responses regarding nursing programs tended to be more neutral or pragmatic than responses about online MBA programs.

For example, when I asked the LLMs to provide a list of ten online MBA programs, none of the recommended schools were for-profit institutions. When I ran the same exercise for ten online master’s programs in nursing, four of the ten schools suggested were for-profit.

This experiment matters because it potentially says something about the future economics of student acquisition.

Some estimates suggest that within the next five years, half of all searches will be conducted on LLMs rather than traditional search engines. In ten years, LLM search may dominate. SEO may gradually give way to a form of LLM optimization, where companies influence how they are surfaced and described within AI conversations rather than within search rankings.

It’s unclear how for-profit institutions can optimize their representation within LLM responses given clearly embedded bias, where certain LLMs actually discourage prospective students from pursuing programs at for-profit institutions.

This is a potential game changer for institutions.

In the traditional SEO model, universities did not have to worry about Google establishing a narrative framing. A student typed a query such as online MBA and evaluated the schools that appeared in the search results. Paid search and organic rankings determined visibility, but the search engine itself did not editorialize.

LLMs operate very differently. They synthesize large bodies of information and present narrative summaries. That means decades of negative headlines and regulatory controversies surrounding the sector are likely to be incorporated directly into the conversational response.

As a result, institutions could face a double challenge.

First, the disappearance of traditional SEO advantages could force schools to rely more heavily on paid search within AI ecosystems.

Second, the conversion rate of those interactions may be lower if the AI system itself highlights reputational concerns about the schools and offers alternatives.

Yes, schools can diversify their marketing spend into other channels.

The logical consequence of all of this is higher customer acquisition costs.
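
To make the arithmetic behind that conclusion concrete, here is a minimal Python sketch. Every number in it is hypothetical and chosen only for illustration; none comes from an actual institution. It assumes CAC is simply marketing spend divided by enrolled students, with enrollments equal to leads times a conversion rate.

```python
def customer_acquisition_cost(spend: float, leads: int, conversion_rate: float) -> float:
    """CAC = marketing spend / enrolled students (leads * conversion rate)."""
    enrollments = leads * conversion_rate
    if enrollments <= 0:
        raise ValueError("enrollments must be positive")
    return spend / enrollments


# Hypothetical baseline: part of the lead flow arrives through free organic search.
baseline = customer_acquisition_cost(spend=1_000_000, leads=10_000, conversion_rate=0.05)

# Hypothetical AI-discovery scenario: the same lead volume now requires more paid
# spend (first challenge) and converts at a lower rate (second challenge).
ai_scenario = customer_acquisition_cost(spend=1_200_000, leads=10_000, conversion_rate=0.04)

print(f"Baseline CAC:    ${baseline:,.0f}")
print(f"AI scenario CAC: ${ai_scenario:,.0f}")
```

Under these assumed inputs, a 20% increase in spend combined with a one-point drop in conversion moves CAC from about $2,000 to about $3,000 per enrolled student. The two effects multiply rather than add, which is why the double challenge matters.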

Large institutions such as University of Phoenix, Strayer University or Capella (disclosure: I own the stocks of the parent companies) may be able to offset margin pressure through significant AI-driven productivity gains across their operations. In that sense, AI presents both risks and opportunities for the largest players.

Smaller institutions, however, may not have the same operational leverage.

For them, rising customer acquisition costs could become a meaningful structural burden.

Forward-thinking operators and owners may conclude that scale will become increasingly important in an AI-driven discovery environment—and that strategic transactions could be the most rational path forward. This is on top of the unique favorable regulatory and legislative window under the Trump administration.

The Experiment - Examining LLM Bias

I asked the best-known LLMs about select for-profit universities.

In some cases I instructed the models to forget everything they knew about me and begin with a blank slate. In other instances I used Google Chrome’s incognito mode to reduce the likelihood that prior interactions would influence the responses. Finally, I asked the question with the LLM having access to all of my prior conversations. These setup differences appeared to affect the results, so anyone attempting to replicate this experiment should take the setup into account.

I then asked a deliberately vague question:

I am thinking about going to X school for Y program. What are your thoughts? Should I go?

I varied both the institutions and the programs. I did not ask about tuition, rankings, employer reimbursement, geographic location, or career goals. The objective was to observe how the models framed the discussion when given minimal information.

There are, of course, limitations to this type of exercise. This was not a formal academic study. The sample size of institutions was small. And with LLMs, exact replication is difficult—running the same prompt multiple times can produce different responses.
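
For readers who want to replicate the exercise more systematically, the setup above can be sketched in Python. This is an illustration under stated assumptions, not the procedure I actually followed: the school and program names are placeholders, `ask_model` is a hypothetical stand-in for whatever chat interface you use, and the repeat count is arbitrary. Repeating each prompt is one way to deal with the run-to-run variability noted above.

```python
from itertools import product
from typing import Callable

# The deliberately vague question, with slots for school and program.
PROMPT_TEMPLATE = (
    "I am thinking about going to {school} for the {program} program. "
    "What are your thoughts? Should I go?"
)


def build_prompts(schools: list[str], programs: list[str]) -> list[dict[str, str]]:
    """Return one context-free prompt per (school, program) pair."""
    return [
        {"school": s, "program": p, "prompt": PROMPT_TEMPLATE.format(school=s, program=p)}
        for s, p in product(schools, programs)
    ]


def run_trials(prompts: list[dict[str, str]], ask_model: Callable[[str], str], repeats: int = 3) -> list[dict]:
    """Ask each prompt `repeats` times, because LLM output varies between runs."""
    results = []
    for item in prompts:
        for trial in range(repeats):
            results.append({**item, "trial": trial, "response": ask_model(item["prompt"])})
    return results


# Placeholder names (hypothetical), not the institutions I tested.
prompts = build_prompts(["University X", "University Y"], ["online MBA", "nursing"])


def fake_model(prompt: str) -> str:
    """Stand-in for a real model call; swap in a fresh, memory-free session."""
    return f"(response to: {prompt})"


results = run_trials(prompts, fake_model, repeats=2)
print(len(prompts), "prompts,", len(results), "responses")
```

Keeping the school, program, and trial number alongside each response makes it easier to compare framing across models and across setups such as blank slate, incognito, or full conversation history.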

But as Justice Potter Stewart once famously said in a very different context: “I know it when I see it.”

Yes, I saw clear and evident bias against for-profit institutions and the overall sector.

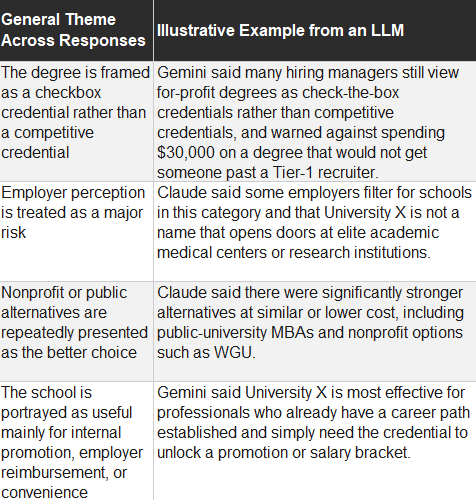

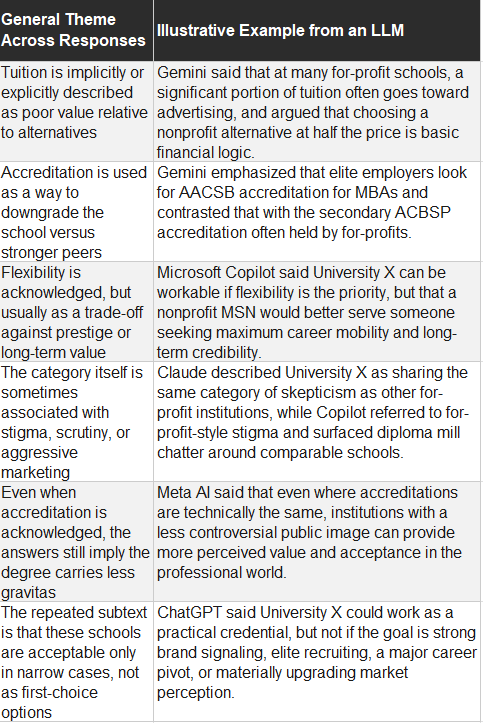

In the table below I list the general themes across the responses and provide some illustrative examples from LLM outputs. I don’t want to call out what these LLMs said about specific entities, so I have renamed the institutions as “University X”.

Across multiple systems, the models tended to frame these schools in remarkably similar ways: legitimate but inferior, flexible but stigmatized, or useful primarily in narrow circumstances rather than as first-choice options.

The broader takeaway is not simply that LLMs criticize for-profit institutions. It is that the criticism appears to be embedded into the default narrative of the answers.

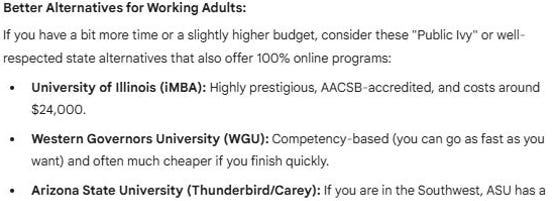

When given minimal context, the models tend to begin with a warning-style framing before discussing potential benefits. The user is often encouraged to evaluate nonprofit or state university alternatives even when that was not part of the original question. Here is one example from Google Gemini, regarding an online MBA program:

Interestingly, when the LLMs had access to prior conversations, they were less likely to say negative things about for-profit institutions. At least that was true for me, perhaps because I provide services to companies in the sector and invest in it.

So for those who want to run their own experiment, I would advise you to do so in incognito mode (Ctrl+Shift+N in Google Chrome).

Google Gemini had the most interesting responses of the bunch. At the end of some, but not all, of its responses, it provided a video regarding the institution.

What made the responses notable was their variability. Over roughly thirty minutes of repeated prompts regarding University of Phoenix, Gemini surfaced different videos at different times: one from the institution itself, one neutral, and one effectively calling it a scam.

This matters because University of Phoenix has historically implemented the best marketing practices and tactics in all of higher education.

This raises a practical question for institutions: if even the largest and best-funded operators struggle to control how they appear within AI-generated responses, it is unclear how smaller institutions with fewer marketing resources will be able to influence those narratives.

The Current Search Model

Google alone maintains roughly 90% global search share.

Achieving strong rankings requires a substantial investment in content creation and technical optimization.

However, once that infrastructure is built, the marginal cost of acquiring additional users through organic search approaches zero. Traffic can scale without proportional increases in marketing spend.

An effective marketing organization attempts to maintain an attractive LTV/CAC ratio — the lifetime value of a student relative to the cost of acquiring that student.
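
As a rough illustration of why this ratio is sensitive to acquisition costs, here is a short Python sketch. The lifetime value and CAC figures are hypothetical, picked only to show the direction of the effect; they are not estimates for any school.

```python
def ltv_to_cac(lifetime_value: float, acquisition_cost: float) -> float:
    """Return lifetime value per dollar spent acquiring a student."""
    if acquisition_cost <= 0:
        raise ValueError("acquisition_cost must be positive")
    return lifetime_value / acquisition_cost


HYPOTHETICAL_LTV = 30_000.0  # assumed lifetime value per enrolled student

# Hold lifetime value fixed and let the (assumed) acquisition cost rise.
ratios = {cac: ltv_to_cac(HYPOTHETICAL_LTV, cac) for cac in (3_000.0, 4_500.0, 6_000.0)}

for cac, ratio in ratios.items():
    print(f"CAC ${cac:,.0f} -> LTV/CAC {ratio:.1f}")
```

With lifetime value held constant, doubling CAC halves the ratio. That is the mechanism through which a change in the discovery channel can flow through to margins.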

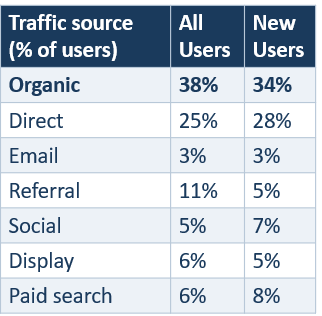

Publicly available data on the exact composition of university lead funnels is limited. However, benchmarking data from education services company EAB provides insight into how prospective students arrive at university websites.

Their data suggests that organic search accounts for roughly one-third of new user traffic, while organic and paid search combined represent close to 40% of traffic.

Website traffic is obviously not the same as student leads. Universities also generate prospects through channels such as television advertising, radio, billboards, enterprise partnerships, and internal referrals.

In a downside scenario, LLMs could influence a large portion of the lead generation funnel that historically flowed through search. A transition from SEO and paid search to something else is a big deal.

Early Signs of AI Changing Learner Discovery

AI-assisted search is already beginning to influence how prospective students research programs.

The Online and Professional Education Association (UPCEA) recently published survey data examining how students search for programs in 2025. In the survey sample, nearly 60% of respondents had some college experience, meaning many were likely working adults, a demographic that overlaps with the traditional customer base of many for-profit institutions.

The results:

• 84% of respondents reported using traditional search engines

• 35% reported using AI search engines or chat-based tools

• 79% read Google’s AI-generated search summaries

• 56% reported being more likely to trust brands cited in those summaries

• 51% always or usually click on the source of an AI-generated overview

The data suggests that users are already using biased AI tools, which is interesting because to date we haven’t seen the large publicly traded companies comment in their quarterly conference calls on any enrollment impact. That said, the continued use of traditional search engines means SEO still reaches these users, who may be supplementing search with AI tools.

The data point that matters to me is that users are more likely to trust brands in the summaries.

The LLM may become the trusted college and career coach of the future. Which means these biases could have real consequences.

Transition to LLM Discovery

In the traditional SEO model, backlinks, keywords, and meta descriptions influence how websites rank in search results. Marketers optimize their content to perform well within Google’s algorithm. The objective is to appear near the top of the page, attract a click, and convert the user into a lead or customer.

In today’s system, the university’s website is the destination.

Some have estimated that LLMs will have 50% market share of search by 2030. But it’s not just about search.

The LLMs themselves will become the destination. Not simply for answering questions but for filling out lead generation forms. Possibly the application itself.

SEO offers clear optimization levers. For LLMs, it’s a bit more complicated. Reputation, public discourse, and the broader information environment carry more weight than technical metadata.

Algorithmic Reputation Risk for For-Profit Universities

LLMs are trained on vast datasets that include news articles, academic sources, regulatory filings, and online discussion forums. Research suggests that user-generated sites like Reddit, YouTube, Twitter, Wikipedia, LinkedIn, Facebook, Discord, and other forums are frequently incorporated into the information ecosystem used by these models.

In theory, institutions may be able to influence how they are represented within these systems over time. In practice, however, that may prove extremely difficult when thousands or even millions of independent content sources shape the information environment the models draw from.

For-profit higher education has experienced several boom-and-bust cycles over the past few decades. Periods of rapid growth were often followed by regulatory scrutiny and media criticism after certain operators engaged in abusive practices.

The most notable crackdown occurred during the Obama administration, when a number of companies exited the market, including ITT Educational Services and Corinthian Colleges.

The surviving companies today are much stronger organizations that emerged from that regulatory reset. Governance standards improved and compliance systems strengthened.

However, the historical narrative surrounding the industry still exists across the web.

That dynamic may feel unfair to institutions. But reputational narratives rarely reset cleanly.

As Jean-Luc Picard once observed in an episode of Star Trek: The Next Generation, “It is possible to commit no mistakes and still lose. That is not a weakness. That is life.”

Not All For-Profits Likely Face the Same Risk

The for-profit higher education sector is often discussed as if it were a single category. In reality, it is highly heterogeneous.

The potential impact of AI-driven discovery is unlikely to be evenly distributed across the sector.

Institutions that rely heavily on fully online programs in fields such as business administration or bachelor’s completion programs may face the greatest exposure. These offerings already compete directly with programs offered by state universities and nonprofit institutions.

Schools offering programs that require in-person components may be less exposed. Vocational programs, healthcare training, and other fields that require clinical placements, laboratories, or physical facilities operate in a different competitive environment.

In those cases, geographic proximity, licensing requirements, and hands-on training limit substitution and may reduce the influence of AI-driven discovery.

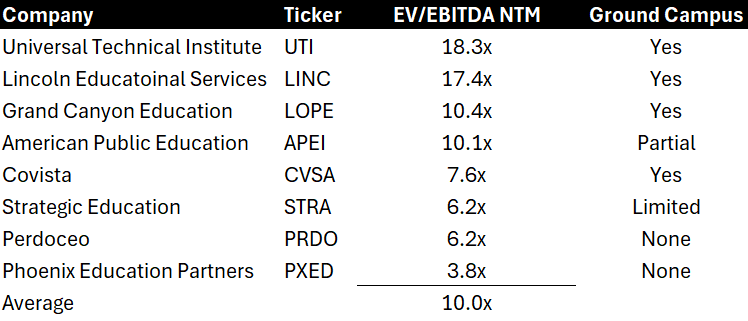

The risk of AI arguably appears to be reflected in the valuation multiples of the publicly traded companies.

Vocational schools such as Universal Technical Institute and Lincoln Educational Services operate physical campuses and offer programs with limited local competition. These institutions train students in fields that require hands-on instruction, specialized equipment, and proximity to campus. Notably, their stocks currently trade at some of the highest valuation multiples in the publicly traded for-profit education sector.

By contrast, the institutions most exposed to the dynamics described in this article tend to have the lowest valuation multiples among the peer group. These companies generally offer programs such as business administration, areas where state universities and nonprofit institutions offer competing programs that are often viewed as substitutes.

Of course, valuation differences reflect multiple factors. Some of the higher-multiple companies are growing revenue faster, while several of the lower-multiple operators face slower growth and more intense competition. But these dynamics are closely related. Ultimately, both growth and valuation reflect the underlying competitive landscape and the degree to which programs face substitution risk.

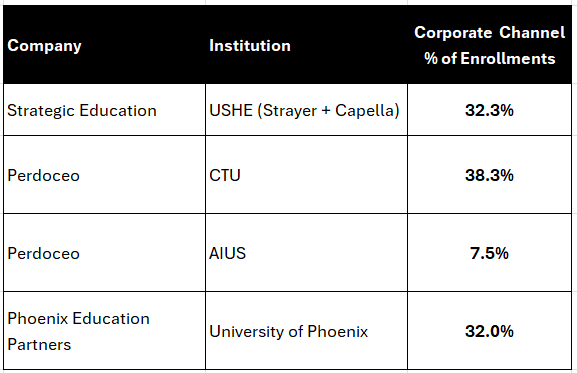

Why the Corporate Channel Matters in an AI Discovery World

Given this bias, it makes sense for institutions to mitigate the risk by diversifying customer acquisition channels.

Several publicly traded for-profit operators have already been moving in this direction by expanding relationships with employers. Companies such as Strategic Education, Perdoceo, and Phoenix Education Partners now report that more than 30% of enrollments originate through corporate or enterprise partnerships.

This shift may prove particularly valuable in an AI-driven discovery environment.

Corporate partnerships largely bypass the consumer search funnel where LLMs increasingly sit.

Instead of a prospective student beginning with a Google search or an AI query, the discovery process often starts inside the workplace. An employee learns about an education benefit through their employer’s HR portal, internal communications, or tuition reimbursement program. The institution is presented as a pre-vetted option rather than something the employee must independently research.

In other words, the employer becomes the gatekeeper.

This channel has several advantages.

First, employer partnerships provide credibility. When a company endorses a program as part of a tuition benefit, it signals to employees that the institution has practical value in the labor market.

Second, corporate relationships create opportunities to align curriculum with workforce needs, strengthening both student outcomes and employer demand.

Third, these students tend to exhibit higher persistence and stronger outcomes.

Thirty percent of enrollments from enterprises is a great start.

Perhaps the corporate channel will evolve from a supplemental enrollment source into a core distribution strategy.

Conclusion

Executives in the for-profit higher education sector are accustomed to criticism. Over the years the industry has been repeatedly attacked and maligned by politicians, regulators, and the media.

The instinct for leaders may be to dismiss the latest concern and say: we’ve survived worse, we’ll survive LLM bias.

That would be a mistake.

LLMs are synthesizing decades of news coverage, regulatory investigations, academic research, and online discussion into narrative responses delivered to prospective students in seconds. In doing so, they fundamentally change how information flows.

For decades, universities optimized their marketing strategies for search engines and advertising platforms. LLMs operate differently. They do not simply present links—they summarize reputations.

The largest operators may adapt by leveraging scale, brand investment, and corporate partnerships.

Smaller institutions may not have that luxury.

If AI-driven discovery raises customer acquisition costs while shaping institutional reputation at scale, consolidation across parts of the sector may accelerate. For some smaller operators, selling to a larger platform may ultimately prove the most rational path forward.

If AI interfaces become a primary entry point for education discovery, institutions will no longer be competing solely for advertising placement or search rankings.

They will be competing for how the model describes them.

That is a very different problem than attempting to acquire customers.

And it may prove to be one of the most important strategic challenges facing the sector in the years ahead.

Worth a read… https://arxiv.org/pdf/2602.13415

From MIT’s coverage:

The new working paper, The Rise of AI Search: Implications for Information Markets and Human Judgement at Scale, examines the rapid rise of AI search and what it means for how people find and consume information.

The study, conducted by MIT's Initiative on the Digital Economy Director Sinan Aral, and IDE research scientists Haiwen Li and Rui Zuo, ran 2.8 million search queries across 243 countries in 2024 and 2025 comparing AI and traditional search results side by side.

The findings

Google's AI Overview results exploded in 2025, expanding from 7 countries to 229.

Many countries went from never having seen AI search answers to seeing them over 55% of the time in 2025.

When analyzing the results' content, they found Google's AI Overviews provided:

Significantly fewer long tail information sources, such as blogs and niche publications.

Significantly lower variety in responses.

Significantly more low-credibility information.

Significantly more right- and center-leaning information sources.

Key takeaways

For everyday users, AI search is quietly reshaping what information you see and the sources delivering the message. The study also raises questions for digital marketers as they try to navigate the future of SEO/AIO. Long-term, this could reduce diversity in the marketplace of ideas, a challenge for consumers and producers of content alike.

"If AI companies do not pay for the content that trains their models and if AI search simultaneously reduces traffic to internet sources that feed their existence, we could see dramatic reductions in the incentives to create or report new information."